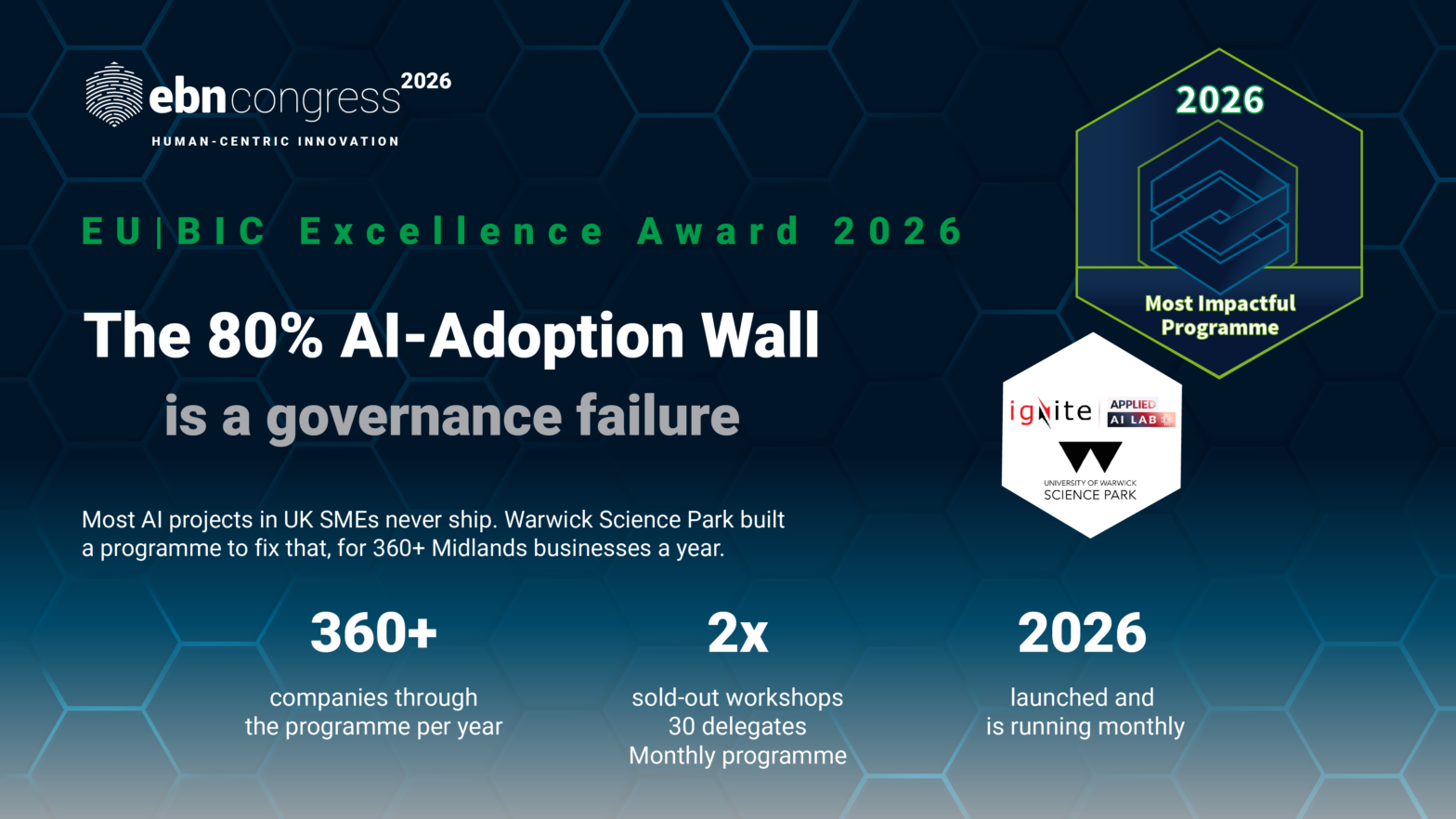

University of Warwick: 2026 EU|BIC Award finalist

Most organisations experimenting with AI never get their tools into production. The demos work. The real-world deployments do not. The reasons are usually the same: the AI starts giving unreliable outputs over time, there is no clear process for checking whether its decisions are correct, the data it handles may not be stored or processed in a legally compliant way, and practical knowledge for safe and effective deployment is lacking. EU|BIC University of Warwick Science Park (UWSP) identified this pattern across its ecosystem and, in 2026, built a programme to break through it.

What the Ignite Applied AI Lab offers

The Ignite Applied AI Lab gives businesses a structured environment in which to build AI tools properly from the start: AI that stays within guardrails, can be checked, and leaves a paper trail. Participants do this by building and deploying a real AI product during a workshop, directly into a real business workflow, so that it operates safely, compliantly, and reliably under real operating conditions rather than in a closed sandbox. By the end, they leave with something that actually works in the real world.

The programme is built on three layers. The first layer is AI governance structures: rules and processes that define how AI should be used responsibly, who is accountable for an AI decision, how to verify AI is doing what it should, and what happens when it goes wrong. The second layer is the Applied AI Lab itself. It is the hands-on workshop where businesses actually build and deploy their AI tools within those rules. Each engagement starts from a concrete business problem defined by the participant, ensuring AI is applied only where it delivers operational value. The third layer is the locally hosted sovereign AI infrastructure. This means that the AI runs on computers physically located at UWSP, rather than on servers owned by big tech companies. This matters for businesses in regulated sectors like healthcare or finance, where data cannot be sent outside the organisation or stored on third-party servers.

The governance-first design makes the programme distinctive. It frames AI as governed infrastructure in which outputs are validated and execution is controlled. Crucially, human oversight is embedded by design: AI outputs are reviewed, decisions can be challenged or escalated, and accountability remains clearly with the organisation, not the system. This is operationalised through four architectural principles:

- Governed components: AI is treated as a piece of infrastructure with clear rules, not a free-running tool that makes decisions on its own.

- Persistent context mechanisms for reliability: AI remembers relevant information consistently across interactions, so it does not contradict itself or lose track of what it is supposed to be doing.

- Validation pipelines: there is a checking process that reviews what the AI produces before it is acted upon, so errors or bad outputs are caught.

- Controlled execution environments that ensure auditability: AI operates within defined boundaries, and a record is kept of everything it does, so you can trace back any decision or output.
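
As a rough illustration only, the validation and auditability principles above could be sketched in Python as follows. This is not the Lab's or FoxlitX's implementation: the names `GovernedPipeline`, `AuditRecord`, `not_empty`, `no_at_sign`, and `fake_model` are all hypothetical, the model call is a stand-in function, and persistent context is left out for brevity.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional


@dataclass
class AuditRecord:
    """One entry in the audit trail (hypothetical structure)."""
    timestamp: str
    step: str
    detail: str


@dataclass
class GovernedPipeline:
    """Hypothetical wrapper: validate every AI output and log every step."""
    generate: Callable[[str], str]  # stand-in for any AI model call
    validators: List[Callable[[str], Optional[str]]]  # return an error message or None
    audit_log: List[AuditRecord] = field(default_factory=list)

    def _log(self, step: str, detail: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditRecord(stamp, step, detail))

    def run(self, prompt: str) -> dict:
        self._log("input", prompt)
        output = self.generate(prompt)
        self._log("output", output)
        # Validation happens before anything is acted upon.
        errors = [msg for check in self.validators
                  if (msg := check(output)) is not None]
        if errors:
            # Failed outputs are escalated to a person, never auto-approved.
            self._log("escalated", "; ".join(errors))
            return {"status": "needs_human_review", "output": output, "errors": errors}
        self._log("approved", "all validators passed")
        return {"status": "approved", "output": output, "errors": []}


def not_empty(text: str) -> Optional[str]:
    return None if text.strip() else "empty output"


def no_at_sign(text: str) -> Optional[str]:
    # Crude stand-in for a personal-data check; a real check would be stricter.
    return "contains an '@' character" if "@" in text else None


def fake_model(prompt: str) -> str:
    # Placeholder for a real model call (assumption for this sketch).
    return f"Summary of: {prompt}"


pipeline = GovernedPipeline(generate=fake_model, validators=[not_empty, no_at_sign])
result = pipeline.run("quarterly invoice backlog")
print(result["status"], [record.step for record in pipeline.audit_log])
```

In this sketch the organisation, not the system, decides what happens to anything marked `needs_human_review`, and `audit_log` holds the trace of each input, output, and decision.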

Participants learn to embed transparency and bias mitigation into their systems from the start, in line with the EU AI Act, GDPR, and international responsible AI principles, including the Bletchley Declaration. Part of the programme is powered by FoxlitX, a novel AI platform that enables hands-on deployment training in production-grade conditions. The Lab is delivered through a modular, partner-based model, which avoids vendor lock-in and enables EU|BICs to adapt the approach to local capabilities, sectors, and regulatory contexts.

A unique offer by EU|BICs

EU|BICs are uniquely placed to offer this because they are neutral. They are not trying to sell a particular AI product, and they have no financial interest in a specific solution. As neutral intermediaries, they connect SMEs, universities, industry partners, and regulators around a shared governance infrastructure, making responsible AI adoption accessible to SMEs that could never afford to build this capability on their own.

This role is an organic extension of how EU|BICs operate. UWSP is also planning to launch an Applied AI Alliance, an initiative intended to become the leading voice on AI governance and regulation in the UK by bringing together the ecosystem actors needed to shape responsible adoption at scale.

Replication elsewhere is therefore a realistic prospect. The AI deployment gap is not specific to the UK. SMEs across Europe face the same barriers: limited expertise, regulatory uncertainty, and no safe environment in which to move from experiment to operation. The programme’s modular structure does not require a specific technical stack. It requires governance, oversight, and an EU|BIC willing to act as the trusted intermediary its ecosystem needs. Any EU|BIC with that ambition can adapt this model to its own regional priorities and sector mix. In doing so, it positions itself as a regional platform for responsible technological development while building a sustainable commercial income stream. Beyond individual deployments, the model builds repeatable regional AI capability, enabling SMEs to progress confidently from experimentation to governed production over time.

How the programme is delivered

The programme is delivered through monthly workshops, each capped at 30 participants. Participants are drawn from UWSP’s existing support programmes as well as from external companies. All workshops sold out immediately. Over a full year of monthly delivery, the programme is projected to reach over 360 companies from the region. In addition, the programme offers regular clinics and drop-in sessions for companies that require more personalised input from its pool of AI experts. Beyond the direct impact on participants, the model has a commercial dimension: it can generate revenue while strengthening the EU|BIC’s support offer. The programme is still too young for robust impact data, but the demand signal is already clear.

The beneficiaries span the full range of an innovation ecosystem. Primary beneficiaries are SMEs, startups, and entrepreneurs. Secondary beneficiaries include corporates requiring validated, secure AI environments; public-sector and regulated-sector organisations needing sovereign data protection; and regional communities that benefit from increased, responsible AI adoption.

University of Warwick Science Park will pitch the Ignite Applied AI Lab on stage at EBN Congress 2026 in Trentino on 18 June. The audience votes for the winner.